|

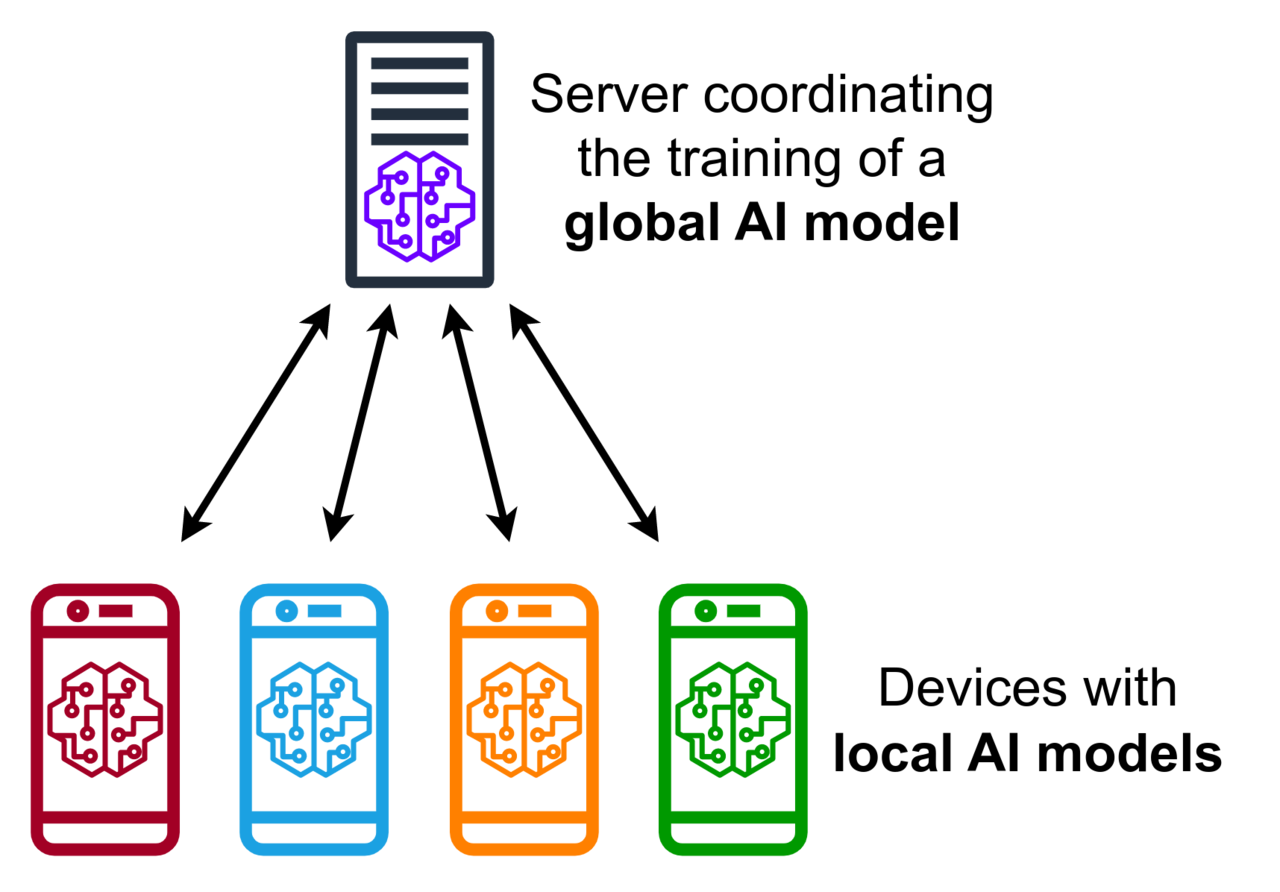

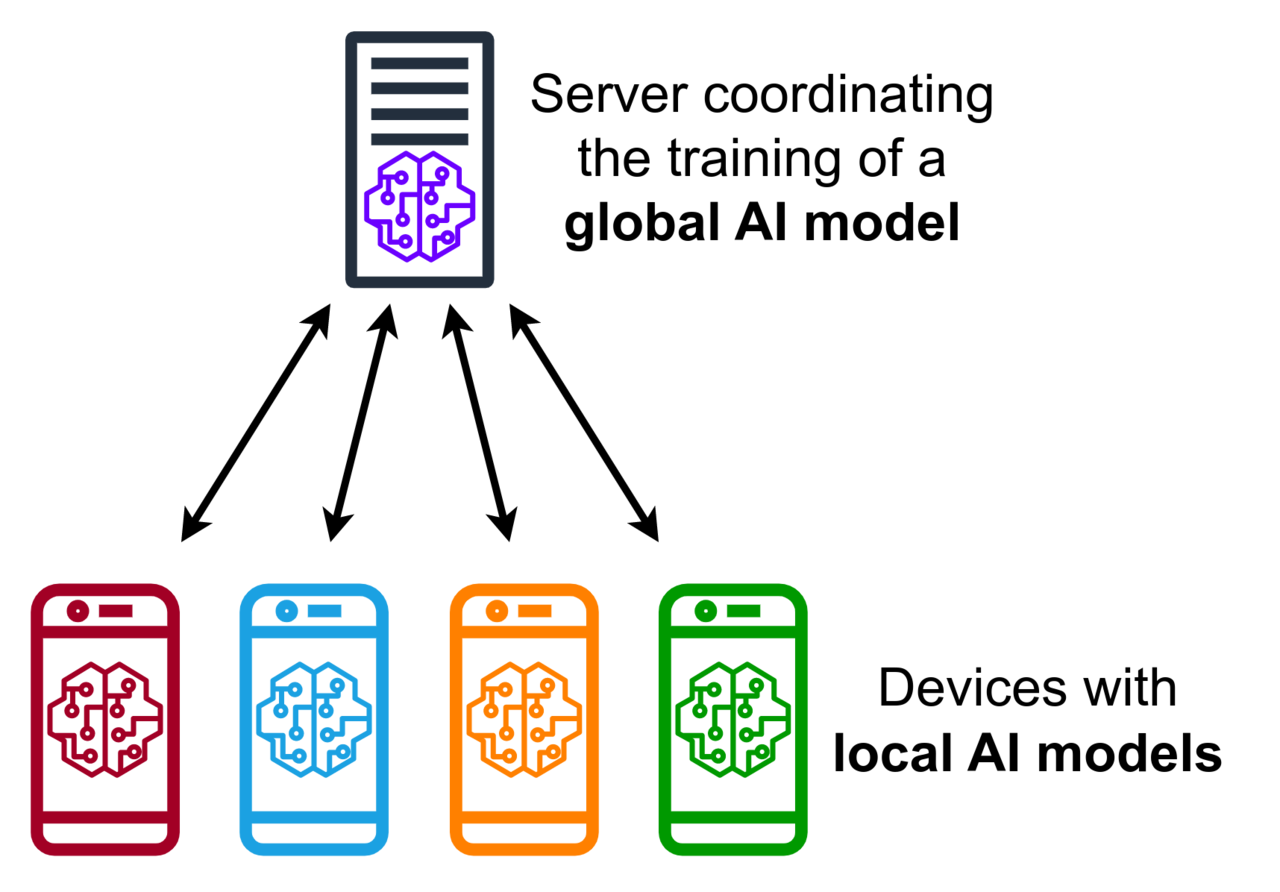

Federated learning has started to emerge as an important research topic in 2015[''Federated Optimization: Distributed Machine Learning for On-Device Intelligence,'' Jakub Konečný, H. Brendan McMahan, Daniel Ramage and Peter Richtárik, 2016] with the first publications on federated averaging in telecommunication settings. Before that, in a thesis work titled "A Framework for Multi-source Prefetching Through Adaptive Weight",[{{cite web | url=https://www.]academia.edu/8625512 | title=A Framework for Multi-source Prefetching Through Adaptive Weight | last1=Berhanu | first1=Yoseph }} an approach to aggregate predictions from multiple models trained at three location of a request response cycle with was proposed. Another important aspect of active research is the reduction of the communication burden during the federated learning process. In 2017 and 2018, publications have emphasized the development of resource allocation strategies, especially to reduce communication[{{cite arXiv|last1=Konečný |first1=Jakub |last2=McMahan |first2=H. Brendan |last3=Yu |first3=Felix X. |last4=Richtárik |first4=Peter |last5=Suresh |first5=Ananda Theertha |last6=Bacon |first6=Dave |title=Federated Learning: Strategies for Improving Communication Efficiency |date=30 October 2017 |class=cs.LG |eprint=1610.05492 }}] between nodes with gossip algorithms[''Gossip training for deep learning, Michael Blot and al., 2017''] as well as on the characterization of the robustness to [[differential privacy]] attacks.[''Differentially Private Federated Learning: A Client Level Perspective'' Robin C. Geyer and al., 2018] Other research activities focus on the reduction of the bandwidth during training through sparsification and quantization methods,[{{Cite journal|date=2021|title=Green Deep Reinforcement Learning for Radio Resource Management: Architecture, Algorithm Compression, and Challenges|journal=IEEE Vehicular Technology Magazine|language=en|volume=16|doi=10.1109/MVT.2020.3015184|s2cid=204401715|last1=Du|first1=Zhiyong|last2=Deng|first2=Yansha|last3=Guo|first3=Weisi|last4=Nallanathan|first4=Arumugam|last5=Wu|first5=Qihui|pages=29–39|hdl=1826/16378|hdl-access=free}}] for future federated learning and the need to compress deep learning, especially during learning.[{{Cite journal|date=2021|title=Random sketch learning for deep neural networks in edge computing|url=https://www.nature.com/articles/s43588-021-00039-6|journal=Nature Computational Science|language=en|volume=1}}] |

|

Federated learning has started to emerge as an important research topic in 2015[''Federated Optimization: Distributed Machine Learning for On-Device Intelligence,'' Jakub Konečný, H. Brendan McMahan, Daniel Ramage and Peter Richtárik, 2016] with the first publications on federated averaging in telecommunication settings. Before that, in a thesis work titled "A Framework for Multi-source Prefetching Through Adaptive Weight",[{{cite web | url=https://www.researchgate.net/publication/336578151_A_Framework_for_Multi-source_Prefetching_Through_Adaptive_Weight | title=A Framework for Multi-source Prefetching Through Adaptive Weight | last1=Alebachew | first1=Yoseph Berhanu |url-status=live|arxiv=2509.13604}}] an approach to aggregate predictions from multiple models trained at three location of a request response cycle with was proposed. Another important aspect of active research is the reduction of the communication burden during the federated learning process. In 2017 and 2018, publications have emphasized the development of resource allocation strategies, especially to reduce communication[{{cite arXiv|last1=Konečný |first1=Jakub |last2=McMahan |first2=H. Brendan |last3=Yu |first3=Felix X. |last4=Richtárik |first4=Peter |last5=Suresh |first5=Ananda Theertha |last6=Bacon |first6=Dave |title=Federated Learning: Strategies for Improving Communication Efficiency |date=30 October 2017 |class=cs.LG |eprint=1610.05492 }}] between nodes with gossip algorithms[''Gossip training for deep learning, Michael Blot and al., 2017''] as well as on the characterization of the robustness to [[differential privacy]] attacks.[''Differentially Private Federated Learning: A Client Level Perspective'' Robin C. Geyer and al., 2018] Other research activities focus on the reduction of the bandwidth during training through sparsification and quantization methods,[{{Cite journal|date=2021|title=Green Deep Reinforcement Learning for Radio Resource Management: Architecture, Algorithm Compression, and Challenges|journal=IEEE Vehicular Technology Magazine|language=en|volume=16|doi=10.1109/MVT.2020.3015184|s2cid=204401715|last1=Du|first1=Zhiyong|last2=Deng|first2=Yansha|last3=Guo|first3=Weisi|last4=Nallanathan|first4=Arumugam|last5=Wu|first5=Qihui|pages=29–39|hdl=1826/16378|hdl-access=free}}] for future federated learning and the need to compress deep learning, especially during learning.[{{Cite journal|date=2021|title=Random sketch learning for deep neural networks in edge computing|url=https://www.nature.com/articles/s43588-021-00039-6|journal=Nature Computational Science|language=en|volume=1}}] |

|

Recent research advancements are starting to consider real-world propagating [[Communication channel|channels]][{{cite journal |last1=Amiri |first1=Mohammad Mohammadi |last2=Gunduz |first2=Deniz |title=Federated Learning over Wireless Fading Channels |journal=IEEE Transactions on Wireless Communications |date=10 February 2020 |volume=19 |issue=5 |page=3546 |doi=10.1109/TWC.2020.2974748 |arxiv=1907.09769 |bibcode=2020ITWC...19.3546A }}] as in previous implementations ideal channels were assumed. Another active direction of research is to develop Federated learning for training heterogeneous local models with varying computation complexities and producing a single powerful global inference model. |

|

Recent research advancements are starting to consider real-world propagating [[Communication channel|channels]][{{cite journal |last1=Amiri |first1=Mohammad Mohammadi |last2=Gunduz |first2=Deniz |title=Federated Learning over Wireless Fading Channels |journal=IEEE Transactions on Wireless Communications |date=10 February 2020 |volume=19 |issue=5 |page=3546 |doi=10.1109/TWC.2020.2974748 |arxiv=1907.09769 |bibcode=2020ITWC...19.3546A }}] as in previous implementations ideal channels were assumed. Another active direction of research is to develop Federated learning for training heterogeneous local models with varying computation complexities and producing a single powerful global inference model. |

Like

0

Like

0

Dislike

0

Dislike

0

Love

0

Love

0

Funny

0

Funny

0

Angry

0

Angry

0

Sad

0

Sad

0

Wow

0

Wow

0